Australian aid investments managed by DFAT are rated on a scale of 1 to 6 in relation to a number of criteria. Scores of 4 or higher are required for the investment to be regarded as satisfactory.

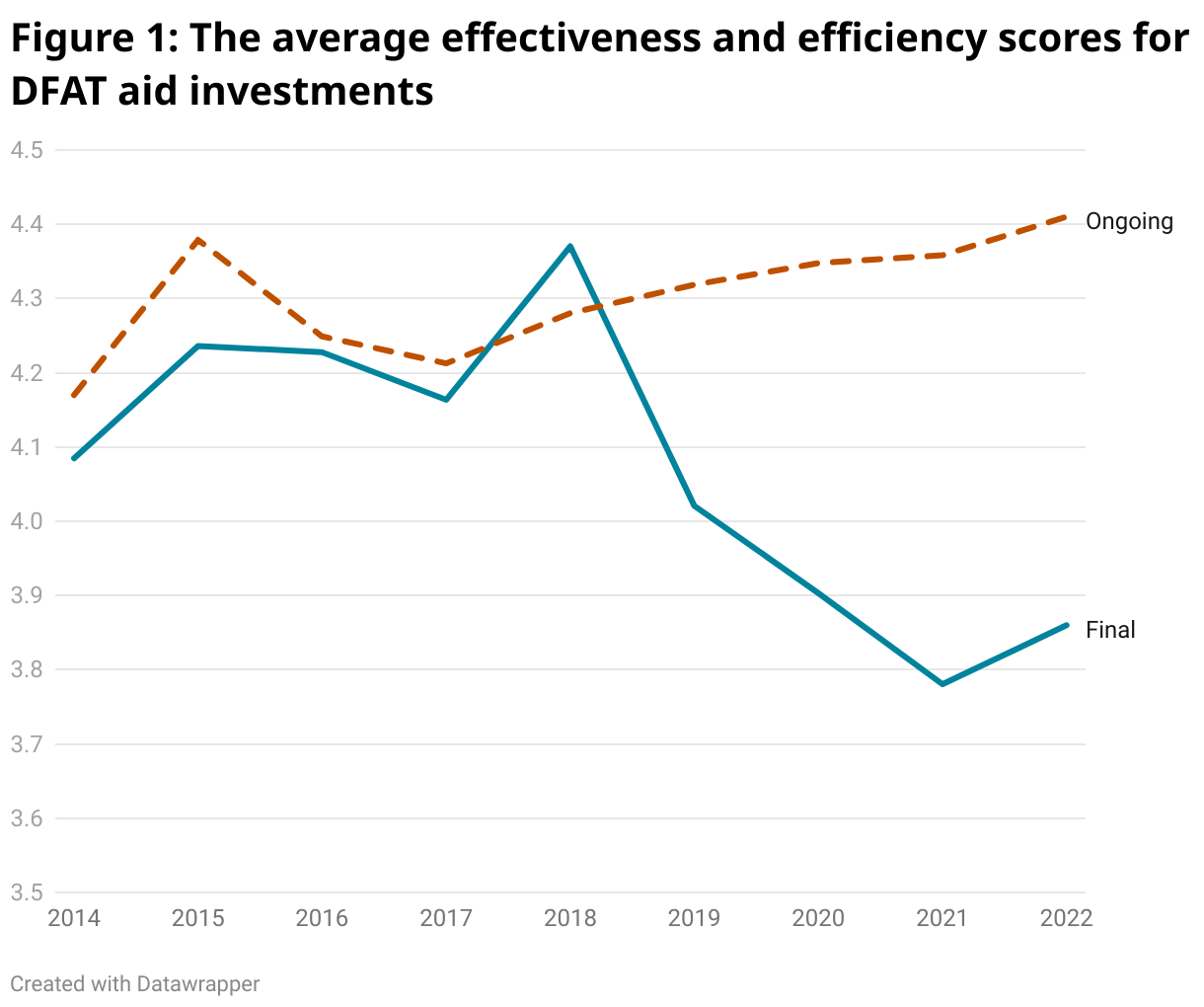

DFAT rates both ongoing and completed investments, but rightly now gives primacy to the latter in its overall assessments of aid performance. The figure below, from our recently released report, shows average efficiency and effectiveness scores for both ongoing and completed investments from 2014 to 2022. Final ratings (for completed investments) show a sharp decline in performance, whereas ongoing ratings show an improvement. In 2021 and 2022, only three in five completed investments were rated satisfactory or better on both effectiveness and efficiency, whereas nine in ten ongoing investments were thus rated.
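To make the "satisfactory on both criteria" figure concrete, here is a minimal sketch of how such a share could be computed. The 1 to 6 scale and the threshold of 4 come from the description above. The `Rating` class, the function names and the sample scores are hypothetical and for illustration only; they are not DFAT data or the report's code.

```python
from dataclasses import dataclass

# From the text: scores run from 1 to 6, and 4 or higher is satisfactory.
SATISFACTORY_THRESHOLD = 4


@dataclass
class Rating:
    """One investment's scores (hypothetical structure, for illustration)."""
    effectiveness: int
    efficiency: int


def is_satisfactory(rating: Rating) -> bool:
    """True only if BOTH criteria meet the threshold."""
    return (rating.effectiveness >= SATISFACTORY_THRESHOLD
            and rating.efficiency >= SATISFACTORY_THRESHOLD)


def share_satisfactory(ratings: list[Rating]) -> float:
    """Fraction of investments rated satisfactory on both criteria."""
    if not ratings:
        raise ValueError("no ratings supplied")
    return sum(is_satisfactory(r) for r in ratings) / len(ratings)


# Made-up sample scores, NOT real DFAT ratings.
completed = [Rating(5, 4), Rating(3, 4), Rating(4, 4), Rating(2, 3), Rating(4, 5)]
ongoing = [Rating(5, 5), Rating(4, 4), Rating(4, 5), Rating(5, 4), Rating(3, 4)]

# Gap between ongoing and completed shares, in percentage points.
gap_pp = round((share_satisfactory(ongoing) - share_satisfactory(completed)) * 100, 1)
print(share_satisfactory(completed), share_satisfactory(ongoing), gap_pp)
```

Note that an investment scoring 3 on one criterion counts as unsatisfactory here however high its other score, which is why this share can sit well below the share satisfactory on either criterion alone.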

Why the worsening performance when we look at completed investments, and why the large and growing disconnect between the assessments of ongoing and completed investments?

You can read our report for a full explanation, but the key factor identified by the report’s regression and decomposition analysis is a shift in 2019 to a more independent rating system for completed investments. The “optimism bias” that afflicts self-awarded ratings has been reduced for final ratings because, since 2019, the rating of completed investments has been the responsibility not of the investment’s managers but of a central unit within DFAT, which undertakes its review with the assistance of external consultants. Ongoing ratings, however, remain the responsibility of investment managers. As a result, the gap between the rating of ongoing and completed investments has quadrupled.

The shift to a more independent rating system and the focus on final ratings should be preserved. However, the current system, in which a large share of investments is rated as unsatisfactory but the aid program is nevertheless reported to be “on track”, is unsustainable. We conclude our report with five additional recommendations.

First, DFAT needs to take its own performance assessments more seriously, and reverse some of the recent decline. Since 2020, the average completed investment rating has been less than satisfactory for both effectiveness and efficiency. Of course, not all projects will succeed, but this is worryingly low. A review is needed, followed by change.

Second, DFAT should guard against solving the problem by grade inflation. After all, whether a project is regarded as satisfactory is, in the end, often a matter of judgement. We recommend against adopting a performance target, and in favour of retaining consultant review and central responsibility for final ratings, as part of a serious validation process to ensure confidence in the accuracy of project assessments.

Third, a communication effort is needed. Reporting a high share of unsatisfactory projects without explanation is a great vulnerability. In 2021 and 2022, 36 aid investments worth about one billion dollars were rated as unsatisfactory. This includes ministerial flagships and other large and critical investments. No explanation has been provided for why these investments are regarded as failures – several still have glossy websites. Rather than hoping that no one will notice, it would be better for DFAT to explain what went wrong with these interventions, and what it is doing to improve the health of the portfolio.

Fourth, ongoing ratings are meaningless due to the large disconnect with final ratings, and the performance assessment system for ongoing investments should be overhauled. More than half the unsatisfactory completed investments rated in 2021 and 2022 were rated as satisfactory in their final ongoing investment rating. The improvement in ongoing ratings during the pandemic further stretches credulity.

Fifth and finally, the Office of Development Effectiveness (ODE) and the Independent Evaluation Committee (IEC) should be re-established. The problems identified in our report have largely occurred since the abolition of the ODE, DFAT’s evaluation body, and its oversight body, the IEC. One of the roles of the ODE, prior to its abolition in 2020, was to assess “DFAT’s internal performance management systems”. This was a role distinct from that of the performance branch, which is responsible for managing the performance system, as against assessing it.

In short, the trends revealed in this report, and the lack of any response to them to date by DFAT, make a compelling case for the reintroduction of the ODE and IEC as DFAT’s aid effectiveness champion and watchdog.

Download the full Devpol report, ‘Why are two-in-five Australian aid investments rated unsatisfactory on completion? An investigation into recent trends in Australian aid performance assessments’.

Disclosure

This research was undertaken with the support of the Bill & Melinda Gates Foundation. The views are those of the authors only.

Thanks Dev Policy for this interesting piece. Like Ed and Denis my immediate thoughts go to the integration of AusAID with DFAT, the corresponding decline in aid capability, and the increasing numbers of projects that would have commenced and completed since 2015. Further analysis around this would be very welcome.

Meanwhile just a word of caution in terms of averaging these ratings – as this is ordinal data it is my understanding it shouldn’t be averaged (as there is not an equal gap between the ratings).

Thanks Jo,

Just on the technical point, at some point I will re-run the regressions as ordered logistic regressions (separating effectiveness and efficiency), as part of robustness tests. But in our case I think we are already pretty safe taking averages.

The scale is numeric (1 to 6) in AQCs/IMRs, which means the gap between ratings is likely to be perceived by those filling them out as more or less equal. Ordinal scales become more problematic when they are based on questions where the response scale is in words, and the gap less clearly equal, such as “how much do you like…”: “a little bit”, “quite a lot”, “a lot”.

Also worth noting is that we are averaging across two variables (not taking the mean of one variable) and using the resulting variable, which is much closer to being continuous.

Thanks again

Terence
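As a rough illustration of the exchange above about averaging ordinal ratings, the sketch below counts how many distinct values the average of two 1 to 6 scores can take, and compares the mean with two summaries that do not assume equal gaps between ratings (the median and the share satisfactory on both criteria). The sample scores are invented for illustration and the variable names are assumptions; this is not the report's method or data.

```python
from itertools import product
from statistics import mean, median

SCALE = range(1, 7)  # ratings run from 1 to 6

# Averaging two 1-6 scores gives values from 1.0 to 6.0 in steps of 0.5,
# i.e. 11 distinct values rather than 6.
combined_values = sorted({(e + f) / 2 for e, f in product(SCALE, SCALE)})

# Made-up (effectiveness, efficiency) pairs, NOT real DFAT ratings.
ratings = [(5, 4), (3, 4), (4, 4), (2, 3), (4, 5), (6, 5)]
combined = [(e + f) / 2 for e, f in ratings]

mean_score = mean(combined)      # treats gaps between ratings as equal
median_score = median(combined)  # relies only on the ordering of scores
share_sat = sum(e >= 4 and f >= 4 for e, f in ratings) / len(ratings)

print(len(combined_values), mean_score, median_score, share_sat)
```

If the mean, the median and the share satisfactory all move in the same direction over time, the concern about averaging ordinal data matters less for the headline trend. An ordered logistic regression, as mentioned in the reply, would be the more formal check.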

This pattern of project ratings is typical across DFAT, the World Bank, and the Asian Development Bank. The WB and ADB have both published various studies examining this issue. I’m not aware of any similar publications by DFAT but I would be surprised if they hadn’t undertaken a similar analysis. To my way of thinking the key issue is “How is DFAT using this information about project success/failure to drive continuous improvement in the Australian aid program?”.

Thank you everyone for some great comments.

Just quickly on the empirical side:

Edward you wrote: “In addition to the correlation between the abolition of ODE and the decline in ratings, I wonder whether there is also a correlation with initiatives that commenced shortly after the merger between AusAID and DFAT. If we assume that many Australian-funded initiatives are ~4 years, is it possible that initiatives commencing in 2014 or 2015, and getting rated from 2018 or 2019 onwards are also behind the decline in ratings? I’d love to see some analysis of that.”

Thank you, good suggestion, I will have a look when I get time. I suspect that the subset of projects that meet the criteria will be too small to allow for definitive analysis. But I could be wrong (I hope I am). It’s worth looking into.

Terence

Hi Stephen and team, very interesting to read this and thanks for sharing.

Two thoughts:

1. The World Bank has Implementation Status Reports which have ratings allocated by managers while a project is still operational. These ratings are the equivalent of the DFAT “ongoing” ratings, no? It would be interesting to aggregate a random sample of ISR ratings and compare them against the ratings given in the respective ICR and see whether the WB also has an optimism bias.

2. In addition to the correlation between the abolition of ODE and the decline in ratings, I wonder whether there is also a correlation with initiatives that commenced shortly after the merger between AusAID and DFAT. If we assume that many Australian-funded initiatives are ~4 years, is it possible that initiatives commencing in 2014 or 2015, and getting rated from 2018 or 2019 onwards are also behind the decline in ratings? I’d love to see some analysis of that.

Hi Ed,

Thanks for your comments.

On your first point, we do address this in our report: https://devpolicy.org/publications/reports/Howes-etal_Recent-trends-in-Australian-aid-performance_May2023.pdf

The disconnect between self-rated ICRs and more independent IEG assessments of completed World Bank projects is 15-18 percentage points in recent years (see footnote 18 of the report). Our estimate of the same disconnect in DFAT is 13 percentage points (p.21).

The optimism bias is even greater at the Bank because the IEG assessments are (even) more independent than the DFAT final assessments – that’s our interpretation.

Just wondering: are the levels of effectiveness and efficiency, confidence in the systems to monitor and respond to these, and communication about same related to the integration of the development cooperation program within DFAT?

I think your explanation is on the money. Given our new govt’s announcement of establishing an Evaluator General I wonder if DFAT will eventually move to rebuild its Office of Development Effectiveness and the independent oversight committee.

Scott Bayley raises an interesting point, about the intentions of the proposed Evaluator-General – which will be in Treasury. This probably reflects the personal enthusiasms of the Assistant Treasurer, Dr Andrew Leigh.

But it will not be located in the Department of Finance, which wrote the APS Guidelines for undertaking evaluation (Resource Management Guide 130).

The key question for all APS Secretaries will be how to cooperate with the Evaluator-General and really take “evaluation” seriously. Especially as DFAT didn’t, in the critical area of our aid programs, when it abolished the Office of Development Effectiveness, even if evaluation was devolved to the line program areas.

The secondary question is: what does DFAT management “do” with such a “large and growing disconnect between the assessments of ongoing and completed investments”? Under Section 15(1)(b) of the PGPA Act, Secretaries must govern their Departments in a way that “promotes the achievement of the purposes of the entity”.

Has the “promotion of achievement” instead driven this series of final ratings?

There is a decided disconnect with this analysis: “In 2021 and 2022, 36 aid investments worth about one billion dollars were rated as unsatisfactory”.

Indeed. The ~$1b value of those unsatisfactory investments alone makes for eye-watering reading, when considering the budget cycle and likely ammunition needed to make the long-term case for building the ODA/GNI ratio.

DFAT is not doing itself any favours by burying this failure rate. Articulating and communicating a clear strategy for quality improvement in project prioritisation, co-design and implementation (along with staff capability development) might be a way to avert the naysayers.