ABC Rural recently reported that Linx Employment had agreed with the Department of Employment and Workplace Relations to leave the Pacific Australia Labour Mobility (PALM) scheme amid a worker mistreatment investigation affecting over 200 workers in Tasmania and Queensland. The allegation is that the firm “failed to provide workers with their legally contracted 30 hours per week”.

There is some confusion about the recent changes regarding minimum hours (see the guidelines for more details), so it’s worth summarising them here:

- From now until 31 December 2023, short-stay PALM workers (formerly Seasonal Worker Programme (SWP) workers) must be offered at least 30 hours per week averaged over their full placement.

- From January to June 2024, short-stay PALM workers must be offered at least 30 hours per week averaged over each four-week period, during their placement.

- From July 2024, short-stay PALM workers must be offered at least 30 hours per week, every week, during their placement.

- Long-stay PALM workers (formerly Pacific Labour Scheme (PLS) workers) must be offered full-time hours, and transition recruitments have until 1 October 2023 to do this.
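The three short-stay rules differ only in the window over which the 30-hour average is taken. The sketch below is one way to read them, not an official compliance test: the function name, the regime labels, and the choice to treat "each four-week period" as consecutive non-overlapping blocks counted from the start of the placement are all assumptions made for illustration, and the weekly figures are invented.

```python
from statistics import mean

def meets_minimum(weekly_hours, regime, minimum=30.0):
    """Check a list of weekly offered hours against one averaging regime.

    regime is a hypothetical label:
      "full_placement" - average over the whole placement (to 31 Dec 2023)
      "four_week"      - average over each four-week block (Jan-Jun 2024)
      "every_week"     - minimum applies to each week (from Jul 2024)
    """
    if not weekly_hours:
        raise ValueError("weekly_hours must not be empty")
    if regime == "full_placement":
        return mean(weekly_hours) >= minimum
    if regime == "four_week":
        # Assumption: consecutive, non-overlapping blocks; a trailing
        # partial block is averaged on its own.
        blocks = [weekly_hours[i:i + 4] for i in range(0, len(weekly_hours), 4)]
        return all(mean(block) >= minimum for block in blocks)
    if regime == "every_week":
        return all(h >= minimum for h in weekly_hours)
    raise ValueError(f"unknown regime: {regime}")

# Invented eight-week placement with two quiet weeks in the first month.
offers = [45, 45, 15, 10, 40, 40, 40, 40]
for regime in ("full_placement", "four_week", "every_week"):
    print(regime, meets_minimum(offers, regime))
```

The same placement passes the full-placement average but fails both later rules, which is the sense in which the requirement tightens over time.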

This blog answers a simple question: how many hours does the average PALM worker get?

PALM workers work an average of 44.5 hours per week, well above the minimum 30 hours required. The median worker gets 40 hours per week in an average week. These numbers are based on the 1,407 PALM workers who were asked in the Pacific Labour Mobility Survey, “In the last 7 days, how many hours did you work?”

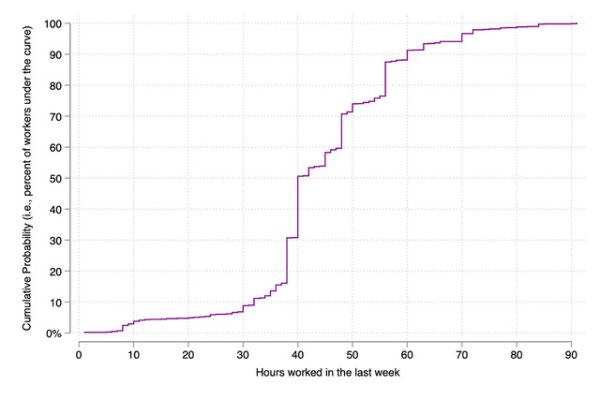

The full distribution of responses is plotted in Figure 1. The vertical axis is the proportion of respondents and the horizontal axis is hours worked. As you move along the line, the graph tells you what proportion of workers reported working at least that many hours in the week before being surveyed. For example, the 40-hour point corresponds to the 50% (that is, the median) mark on the vertical axis. Fewer than 10% of surveyed workers reported working more than 60 hours, and about as many reported fewer than 30 hours. A spread of this size is what you would expect, since the question does not account for whether people were sick or injured that week, whether mobility restrictions were in place, the season, or which part of the scheme people are in.
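For readers who want to build this kind of "at least that many hours" curve from their own data, here is a minimal sketch. The function names are illustrative and the ten responses are made up; they are not survey values.

```python
def share_at_least(hours, threshold):
    """Proportion of respondents reporting at least `threshold` hours."""
    return sum(h >= threshold for h in hours) / len(hours)

def survival_curve(hours):
    """(threshold, share working at least that many hours) for each distinct value."""
    return [(t, share_at_least(hours, t)) for t in sorted(set(hours))]

# Made-up responses to "In the last 7 days, how many hours did you work?"
responses = [0, 20, 38, 40, 40, 45, 50, 60, 65, 70]

for threshold, share in survival_curve(responses):
    print(f"{threshold:>3} hours or more: {share:.0%}")
```

The curve starts at 100% and can only fall as the threshold rises; the hours value at which it crosses 50% is the median.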

Figure 1: PALM workers’ weekly hours

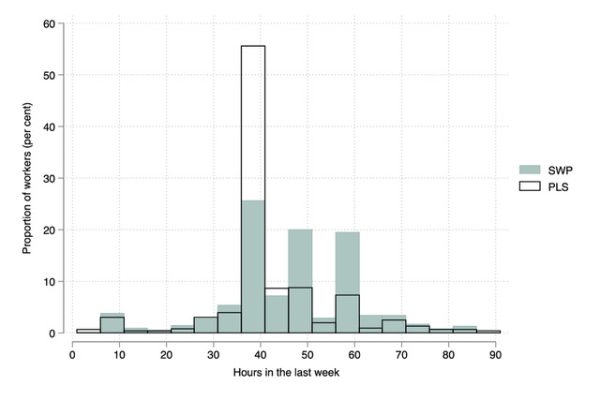

Figure 2 shows the full distribution of hours for short- (SWP) and long-stay (PLS) PALM workers separately. Notice three things. One, the mass of workers around the full-time week for the PLS, as you would expect since they are on full-time contracts. Two, to the right of this mass you see that SWP workers tend to work longer weeks than their PLS counterparts. Three, they are of similar shape on the bottom (left-hand side) end of the distribution: seasonal workers are only slightly more likely than full-time PLS workers to be getting a small number of hours in a representative week.

Figure 2: Weekly hours, SWP vs PLS

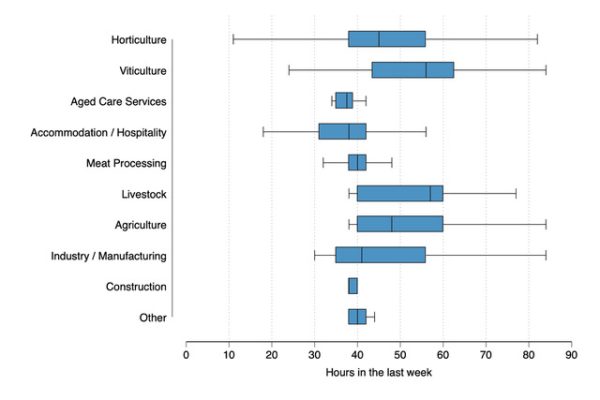

Figure 3 disaggregates the data by sector. The left and right sides of the boxes are the 25th and 75th percentiles. Key PLS sectors like meat processing and aged care are tightly centred on a full-time week. Ranges are larger in seasonal sectors. In every sector, over 75% of workers got more than 30 hours per week the week before they were surveyed.

Figure 3: Weekly hours by sector

To examine the possibility of a lack of hours more explicitly, we also asked workers “While in [host country] this trip, have you ever received less than 15 hours work in a week not by choice?” and “While in [host country] this trip, have you ever received zero hours work in a week not by choice?”

Under the prior arrangements for short-stay workers, the answer to these questions should be “Yes” for some workers some of the time. However, the frequency and extent are important, especially if workers have limited ability to smooth their expenditures.

Figure 4 plots the responses. Long-stay PLS workers provide an important baseline here. Since they are on full-time contracts, the levels for PLS workers show the proportion of workers you would expect to receive zero or less than 15 hours in a situation of full-time employment or with less seasonality. That is, they approximate a natural rate attributable to illness, COVID-19, or other things. Comparing the short-stay seasonal workers to this level gives a crude picture of how much more prevalent these episodes are in the more volatile sectors. Almost a third of SWP workers had at least one week with less than 15 hours of work, compared to almost a quarter of long-stay PLS workers. SWP workers are 6 percentage points more likely than their long-stay PLS counterparts to have ever had a week with less than 15 hours, and only 3 percentage points more likely to report ever receiving zero hours.

Figure 4: Share of workers who ever had low hours

Employers who recruited seasonal workers before the recent changes will still have some workers who at some point receive fewer hours than the new arrangements allow, since they planned their workforces based on the old arrangements. However, during this period, most PALM workers received more than the minimum 30 hours per week, and many received double that.

The new arrangements shift the risk of low workloads from migrant workers to employers. This will likely affect hiring decisions, especially in highly seasonal sectors as PALM becomes a less seasonal worker program.

Bal,

A census covers everyone and a sample covers some share of that population, with a view to shining some light on the characteristics of the population.

A census on this matter does not exist, so we conducted a survey. Indeed, a census of these workers would be a waste of valuable time and money and add little value beyond our extensive survey.

All possible steps were taken to make the sample as representative as possible, and representativeness naturally improves as the sample gets bigger.

Bespoke microeconomic surveys often cover just a few hundred respondents, not thousands; samples in the thousands are more the realm of official surveys like the HIES. Here, we are closer to the latter than to a small survey.

Calling academics whose conclusions (here, facts, hard data) you don’t agree with “irresponsible” because you don’t understand the methods is incredibly poor practice, and usually an indication to not be taken seriously.

Best,

Ryan

Ryan, if the methods are as described in the article, then I take it as read that they support the legitimacy of the analysis.

However, that does not negate the fundamental issue, which is that it is a roughly 4% sample. The question raised is a critical one: workers, people and governments in the Pacific are concerned with it. For such an important issue, would you agree that it would be responsible research for the paper to admit to some limitation in its analysis and provide some caveat, e.g. that the surveyed sample may not be fully representative, even if one is confident in the data?

Hi Bal,

Thanks for your comment again.

First, I should flag for readers that I understand that there are some people who do not like the results because they do not align with their own political or personal agendas, and are trying to discredit the legitimacy of the data, which frankly has had more checks and balances in its data collection than the vast majority of datasets in the region. (I will call it data, rather than analysis because that is more what I’ve shared so far. We are literally reporting the basic new information.) This type of behaviour, in my humble opinion, is anti-science, post-truth, disinformation spreading, and people deserve better. I trust that you can discern these agendas from those trying to understand the situation and use evidence in good faith, which is indeed what we are trying to offer here and a central part of our mission.

Caveats are offered where needed, but I am not sure that’s appropriate here. I’m giving you a plot of the raw data, describing who it covers, and the main new fact is the average at the top. The median, also reported, is my preference though, as it is a measure of central tendency less sensitive to outliers or potential bias in the tails. What is presented above is a super rudimentary descriptive analysis compared to the type of empirical work economists usually do; if you read my other research, there is no shortage of caveats and checks on the research designs.

A four per cent sample is large and puts this survey in very good stead compared to other data we use to think about critical issues. Take the last PNG HIES for example: 4,191 households, out of a population of… well… I’ll let you work out that share given the recent controversy, but it’s much less than four per cent. You can do a similar exercise for DHS, MICS, or any other survey, and you will see that 5 per cent is great, actually. There is a lot you can read up on the basics of sampling strategies should you be interested too, or I could happily provide some introductory texts.

Of course, a sample will not be “fully representative”, ever. This is an obvious fact; there is always some sampling error. (I’m happy to share what we estimate these to be from the survey design.) As a sample gets larger, this is usually reduced. More importantly here, our sample is not perfectly random. This was impossible in implementation, as we had to work hard to get contacts, lists, and so forth. At the same time, we don’t have any reason to suspect any major bias, and the generous number who responded openly certainly helps with this. That our quantitative findings align with large-scale qualitative work done at the same time with often more negatively selected workers and communities is also reassuring. Indeed, one has to have some pretty creative assumptions about the nature of sample selection to get to a substantially different interpretation of the data.
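To give a rough sense of scale for sampling error at this sample size, the sketch below computes a textbook 95% margin of error for a proportion. It assumes simple random sampling, which the paragraph above makes clear does not strictly hold here, so it is a back-of-envelope benchmark and not the survey's own design-based estimate. The population of 35,000 is the commenter's rough figure, and the function name is illustrative.

```python
import math

def margin_of_error(n, population, p=0.5, z=1.96):
    """Approximate 95% margin of error for a sample proportion.

    Assumes simple random sampling without replacement (an assumption,
    not the survey's actual design). p=0.5 is the worst case.
    """
    standard_error = math.sqrt(p * (1 - p) / n)
    # Finite population correction: shrinks the error slightly when the
    # sample is a non-trivial share of the population.
    fpc = math.sqrt((population - n) / (population - 1))
    return z * standard_error * fpc

n, population = 1407, 35000  # respondents; rough PALM headcount from the thread
print(f"sample share: {n / population:.1%}")
print(f"worst-case margin of error: +/-{margin_of_error(n, population):.1%}")
```

Under these assumptions the precision is driven almost entirely by the number of respondents rather than by the share of the population surveyed; the correction for the four per cent share changes the result only slightly.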

One potentially important type of selection is absconders, for example. We have some of them in the sample but, naturally, not all. To the extent that their response values are wildly different from the others (say, they’ve left because they got no hours) and you would want to count more of them as current scheme workers, including more of them in your sample would add more “low” values and pull the average down. For this to move it enough to substantially alter the main descriptive findings, say to shift the medians, you might need to make some heroic assumptions though, and this would be a different worker sample. Our frame was to try to get a sufficient number of workers (enough to do statistical analysis) from each country-scheme pair.

I hope that the above information is helpful for you to understand it a bit better, and I’m happy to discuss further.
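A rough way to see how strong the assumptions would need to be: count how many additional low-hours respondents would have to be added before at least half of the augmented sample sat below 30 hours. The sketch below takes the share of current respondents below 30 hours to be about 10%, which is an approximation read off the post's description of Figure 1 and not an exact survey figure; the function name is illustrative.

```python
def extra_low_responses_needed(n_total, n_below):
    """Smallest number k of added below-threshold responses such that
    at least half of the augmented sample is below the threshold.

    Solves 2 * (n_below + k) >= n_total + k for k.
    """
    return max(0, n_total - 2 * n_below)

n_total = 1407                   # respondents to the weekly hours question
n_below = round(0.10 * n_total)  # ASSUMPTION: roughly 10% reported under 30 hours
needed = extra_low_responses_needed(n_total, n_below)
print(f"additional low-hours respondents needed: {needed}")
```

On that assumption, the number of missing low-hours workers would have to be on the order of 1,100, close to the size of the existing sample, before the median fell below 30 hours.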

We need more surveys like this, as it is important to triangulate what evidence we can get and not just have one single reference. In the meantime, I hope that our new data is a helpful resource for researchers and policy analysts. This is the first survey to cover all three schemes and will be the first longitudinal data for the region once we do the second wave. It’s an important contribution to the Pacific data ecosystem, which we hope others are also eager to get the most out of.

We’ll be posting all the de-identified data and the documentation for anyone to check and use themselves when we launch a major report using it shortly. I hope it leads to lots of productive downstream analysis and discussion like this. I wholeheartedly encourage any and all exercises, and indeed criticisms, that compare or leverage other datasets to look into these concerns and better understand representativeness and any other reporting issues. All data have strengths and weaknesses, and it’s only from an intimate use of them that we really pick these up over time.

Warmest wishes again,

Ryan

Interesting to see the conclusion here that workers get more than 30 hours, a conclusion based on a survey of a limited pool. As the paper admits, “These numbers are based on the 1,407 PALM workers who were asked in the Pacific Labour Mobility Survey”. Of the roughly 35,000 PALM workers, that’s approximately 4%. To draw such a conclusion from that portion of surveyed workers is somewhat irresponsible as to the question of “how many minimum hours the workers get”.