When the Australian government cut aid last year, Australians didn’t exactly race to the barricades. In fact, many actually seemed quite happy. When we commissioned a survey question about the 2015-16 aid cuts, the majority of respondents supported them.

Since then, we’ve started studying what, if anything, might change Australians’ views about aid. There’s an obvious practical reason for this: helping campaigners. Yet the work is intellectually interesting too – a chance to learn more about what shapes humans’ (intermittent) impulse to aid distant strangers.

To study what might shift Australians’ attitudes to aid, we used survey experiments designed to test whether providing different types of information changes support for aid. To date we’ve run three separate experiments, each involving about a thousand people. In each experiment about half of the participants (the control group) were asked a simple question about whether Australia gives too much or too little aid, with no additional information. This question stayed the same in all three experiments.

In each experiment the other half of the participants (the treatment group) were asked an augmented question: the basic question plus some information on Australian aid giving. People were randomly allocated to the treatment and control groups, which means that (to simplify just a little) any overall difference between the two groups’ responses has to have been a result of the extra information the treatment group was given.
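
The logic of random allocation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the survey company’s actual procedure: the function names, the response labels and the toy responses are all hypothetical. Participants are shuffled into two equal-sized groups, and the effect of the extra information on each response category is estimated as the treatment group’s share minus the control group’s share.

```python
import random
from collections import Counter

# Hypothetical response categories for the basic "too much / too little" question.
CATEGORIES = ("too much", "about right", "too little")


def randomise(participants, seed=None):
    """Randomly split participants into (control, treatment) halves."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def shares(responses):
    """Proportion of responses falling in each category."""
    counts = Counter(responses)
    total = len(responses)
    return {cat: counts[cat] / total for cat in CATEGORIES}


def estimated_effects(control_responses, treatment_responses):
    """Treatment share minus control share, for each response category.

    Because allocation is random, these differences estimate the effect of
    the extra information, up to sampling noise.
    """
    control = shares(control_responses)
    treatment = shares(treatment_responses)
    return {cat: treatment[cat] - control[cat] for cat in CATEGORIES}
```

With toy responses where 5 of 10 control respondents and 3 of 10 treatment respondents say "too much", the estimated effect for that category is a fall of 20 percentage points. In a real survey of about a thousand people, differences this size would be judged against sampling noise before being called an effect.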

In the first experiment people in the treatment group were given information on how little aid Australia gives as a percentage of total federal spending. In the second experiment the treatment group was shown how Australian aid as a share of Gross National Income (GNI) has declined over time. In the third experiment declining Australian aid was contrasted with rapidly increasing aid levels in the UK. (The full wording of all the questions is here.)

Before you read on to the results, consider which piece of additional information you think would be most likely to change people’s minds.

For what it’s worth, I was confident that the information in Experiment 1, on just how little aid Australia gives, would shift opinion a lot. Last year, as we studied publicly available surveys on aid, my colleague Camilla Burkot found what I like to call the ‘aid ignorance effect’: people who don’t know how much aid Australia gives, or who overestimate how much is given, are more likely to favour aid cuts than their better-informed compatriots. This problem, I thought, could be easily cured by explaining how little Australia gives. I also thought that telling people about trends in Australian aid over time might be even more effective, by providing a frame of reference for what Australia could do: what it had done in the past. On the other hand I thought the comparison with the UK would add little: if there’s one thing I’ve learnt since moving to Australia, it’s that Australians are not interested in what the British do.

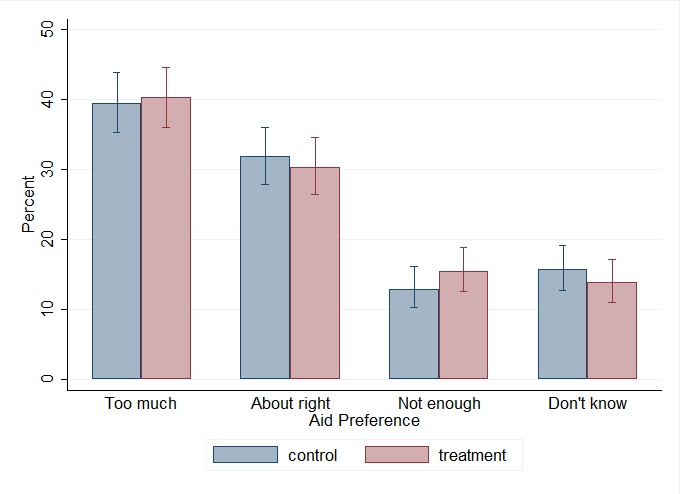

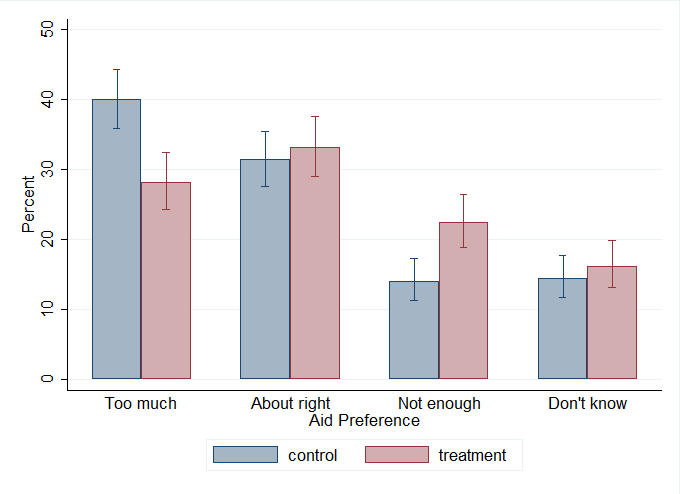

The charts show the results of the experiments. Blue bars show the percentage of control group respondents in each response category. Red bars do the same for the treatment group. If the treatment had an effect, the blue and red bars will differ noticeably in length.

Experiment 1 – telling people how little Australia gives

The first chart shows us that I was utterly wrong: telling people just how little aid Australia gives changed nothing. There was a slight increase in the proportion of respondents who thought Australia gave too little aid, but the change was very small, and wasn’t statistically significant. So much for curing the ignorance effect.
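
Whether a small shift like this is “statistically significant” is usually judged with a test for a difference between two proportions. The post does not say which test was used, so the sketch below, a standard pooled two-sided z-test, is an assumption; the function name is hypothetical and the counts mentioned afterwards are made up for illustration, not taken from the survey.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Pooled two-sided z-test for a difference between two proportions.

    Returns (difference, z statistic, two-sided p-value), where the
    difference is p_b - p_a (e.g. treatment share minus control share).
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Under the null hypothesis both groups share a single proportion.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value
```

With roughly 500 people per group, a hypothetical rise from 50 to 60 respondents saying “too little” (10% to 12%) gives a p-value of about 0.3, well short of the conventional 0.05 threshold. This illustrates why a visible but small change between the blue and red bars can still fall within sampling noise.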

Experiment 2 – information on trends in Australian aid over time

Adding information on trends in Australian aid over time had a slightly larger impact, reducing the percentage of respondents who said Australia gives too much aid by about five percentage points. This change was quite close to being statistically significant once I corrected for an imbalance between the treatment and control groups. Even so, the magnitude of the effect was modest. Adding past giving as a frame of reference may help, but its impact isn’t huge.
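
The post does not say how the imbalance was corrected; regression adjustment for covariates is a common choice. A simpler relative, sketched below as an assumption rather than the method actually used, is post-stratification: estimate the treatment effect separately within each stratum of the imbalanced covariate (for instance, hypothetically, voting intention), then average those within-stratum effects, weighting each stratum by its share of the whole sample. The function name and record layout are illustrative only.

```python
from collections import defaultdict


def stratified_effect(records):
    """Post-stratified difference in proportions.

    `records` is an iterable of (stratum, treated, outcome) tuples, where
    `treated` is True for the treatment group and `outcome` is 1 if the
    respondent said Australia gives too much aid, else 0. Every stratum
    must contain at least one treated and one control respondent.
    """
    # stratum -> {treated flag -> [sum of outcomes, count]}
    cells = defaultdict(lambda: {True: [0, 0], False: [0, 0]})
    total = 0
    for stratum, treated, outcome in records:
        cell = cells[stratum][bool(treated)]
        cell[0] += outcome
        cell[1] += 1
        total += 1

    effect = 0.0
    for groups in cells.values():
        t_sum, t_n = groups[True]
        c_sum, c_n = groups[False]
        weight = (t_n + c_n) / total  # stratum's share of the full sample
        effect += weight * (t_sum / t_n - c_sum / c_n)
    return effect
```

If, by chance, the treatment group contains fewer members of a sceptical subgroup, the naive difference between groups can look large even when the information changed nothing within any subgroup; comparing like with like inside each stratum removes that artefact.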

Experiment 3 – comparisons with the UK

On the other hand, the impact of contrasting Australia to the UK is startling. Many more respondents thought Australia gave too little, and a lot fewer thought it gave too much.

While the result clearly shows just how little I know about the Australian psyche, unfortunately it doesn’t – I think – give campaigners a magic bullet. The UK has not been a typical donor in the last decade. And if you start a debate contrasting Australia with the UK, you may find your opponents countering with examples of less generous countries, like New Zealand or the United States.

Taken together, however, the three experiments do provide some broader insights into how people think about aid volumes, and what might change their thinking. Specifically, information on its own is meaningless without a frame of reference, or point of contrast. I had thought that, in a country that has become less generous with time, Experiment 2 might have provided this, but actually – perhaps because Australians don’t want to be seen as bad international citizens – the most effective point of contrast appears to be with what other countries are doing.

Back in Old Blighty, no doubt, Queen Elizabeth would be heartened if she knew her unruly antipodean subjects could be influenced in this way. Here in Australia, however, the finding gives us an important new line of inquiry: learning more about what types of international comparisons work, and why.

[Update: I’ve now published a full Devpolicy discussion paper, which covers relevant literature and includes robustness testing. The results are the same. The paper is here.]

Terence Wood is a Research Fellow at the Development Policy Centre. His PhD focused on Solomon Islands electoral politics. He used to work for the New Zealand Government Aid Programme.

Terence and Camilla also presented this research at the 2016 Australasian Aid Conference – more details available here.

As a planning student in W.A. currently undertaking a unit in International Development as an elective, I must say I found this article, a quantifiable study of international aid and of Australians’ perceptions of it, quite refreshing, albeit disappointing. Whilst I understand that Australia still has significant work to do on home soil (and whilst I do not hold myself out to be an expert in Australian economics or finance), surely we as a nation can afford to support the development of others, especially those who are substantially worse off than we are?! I also read Terence’s blog post from 2015, What do Australians think about foreign aid?, which was particularly eye-opening. To discover that the Australian federal government gives less than 1% of the federal budget in aid was mind-boggling.

I also have to question people’s willingness to dig into their own back pockets. With 75% of Australians supporting the government’s decision to provide foreign aid (Burkot & Wood 2015), would they themselves actually donate? I doubt it. I expect only a small proportion of that 75% would be willing to open their own purses. Whilst I think providing Australians with information on how much money the Australian and British governments spend is a must, perhaps more information is required. I would like to know exactly where the money is going, how many people it is likely to help, who it will help, and whether it will go to long-term development that ultimately helps the country stand on its own feet in the years to come, rather than to short-term ‘fixes’.

Hi Monique,

Thank you for your comment. My rough estimate on the basis of the data I have is that about 10% (maybe as many as 15%) of voting age Australians donate to aid NGOs in any given year. The number would be higher, obviously, if we took a longer period, say once every 3 years.

If you want detailed info on Australian government aid spending please see the Devpolicy Australian Aid Tracker.

Also, ACFID have a great map of where their members (most Australian aid NGOs) work. I can’t find it at present (in haste as I’m travelling) but it is on their website somewhere.

Thanks again for your comment.

Terence

Hi Terence, very interesting study. Like Weh, my mind goes immediately to what people think of when we talk about aid: just the dollar volume, or the impact that aid achieves? If the questions presented impact data, showing progress as well as highlighting further inroads to be made, the too much/too little/just right responses might produce interesting findings.

Thanks for prompting such an interesting discussion.

Thank you Pete,

Good comment. One way of thinking about this is that the fact the UK test did have an impact is pretty good evidence that beliefs on efficacy are not the sole constraint on support. Numbers and levels clearly have an effect, though only — apparently — in combination with more social/normative factors.

That said, we are also planning to test efficacy and the like. Stay tuned.

Terence

Hi Terence,

Interesting study! The results are in line with a mass of social psychological research that finds norms guide beliefs and behaviour above and beyond attitude persuasion (e.g., aid volume).

Norm perception can be derived from individual behaviour, information about the group, and institutional signals. Given that the institutional signaling in Australia is pretty dismal, I wonder if the most effective method of garnering support for increasing aid would be to highlight the number of in-group members (Australians) who support aid increases, rather than emphasising the majority who don’t?

Your previous opinion poll study showed 43% opposed the cuts: that’s (to extrapolate) over 10 million Australians who support aid increases! I wonder how this phrasing would change support compared to a control?

Here’s a great review article about norms and social change:

“Some interventions aimed at influencing norms simply present individuals with new summary information about the group, hoping to replace the individual’s personal and subjective representation with this summary information.” (p.189).

Would be interesting to see this approach applied to perceptions of aid.

Cheers,

Kylie

Thanks Kylie,

That is a great comment, with a really interesting suggestion. Resources permitting I would love to run that test. The article looks excellent too — thank you.

Terence

Hi Terence,

Not a problem. I actually had a few extra thoughts after I posted:

– Changing norm perception isn’t just about numbers, but identification and legitimacy as well. A Coalition-voting audience (the most supportive of aid cuts in your opinion poll) might be more convinced by a petition to increase aid signed by 50 Coalition voters, than one signed by 50,000 Labor voters (or 500,000 Greens voters). A petition supported by an influential social referent for the Coalition voters (e.g., a Liberal politician) may add to the effect.

– Presenting descriptive information is often ineffective in changing attitudes, as it can appear to normalise the status quo, even more so if it suggests momentum. So I’m not too surprised your condition showing declining aid volume wasn’t convincing for participants (it may have actually been counterproductive if participants inferred declining normative support for aid in Australia along with those figures).

I’d be really interested to see any followup studies!

Cheers,

Kylie

Thanks again Kylie,

Two really good points. On the first, researchers in the UK have some provisional findings suggesting that normative communities are very important in shaping attitudes to development, which fits very nicely with what you’re saying here.

On the second, I like the idea of what we might call ‘momentum bias’. Whereas I’d thought the trends would show people what could be done by providing a reference point in the past, as I take it you’re suggesting that people see the trend and are swayed by it (thinking, I guess, ‘there’s some good reason for it, so let’s keep going’). That said, there’s no obvious evidence of “momentum bias” in the UK: aid is trending upwards there, but people are still unhappy about this on average (although of course any such effect might be masked by other effects).

Intriguingly, one point I didn’t cover in the blog was that the trend information quite dramatically decreased the proportion of Coalition voters who think the government gives too much aid. This is a finding I’m still puzzling over, although my guess is that the trend data showed Coalition supporters that the government had reversed the Rudd increases, so they were somewhat more sated in their desire to cut.

Thanks again for great comments.

Terence

Your title suggests you think the UK example has impact because of global norms and the idea of being a good global citizen. I’d like to think this, but wonder if experiment three simply highlights a desire to compete and be better than other countries, rather than any commitment to a global norm. (Although, arguably, it could be competition over achieving the global norm.) I’ll be interested to see what further inquiry shows. What it does highlight is how little we really know about how publics approach these issues of global citizenry, and what are the key factors driving or influencing their thinking.

Thanks Jo. My inclination is that it’s norm confirming not competition. (Would people really sacrifice their taxes just to say they were better than the British?) That said more research would be great for fleshing out the picture.

When World Vision surveyed Australians in 2009 we found that 30% of respondents wanted to be in the top 5 aid donors by volume and a total of 68% in the top 10. 63% agreed that “Australia should be a world leader in reducing global poverty”. I took this to mean that a majority of Australians wanted Australia to be amongst the leaders in aid but not necessarily out in front. This suggests to me less interest in competition and more basing our response on our relative wealth in the world.

Thanks Garth. That would be intriguing to test experimentally.

It’s great to see a methodical approach to these questions, Terence. Can I suggest that, as well as testing other additional information in the questions (e.g. information about what aid has achieved), Devpolicy also compares results from one-off questions in general surveys with questions in dedicated aid surveys, where respondents have time to give more thought to aid through a range of related questions.

I would also test online polling against telephone or in-person polling. Members of these online polling panels often receive payment for participating in surveys, which may influence participation and results despite sample weighting corrections.

Thanks Garth,

You’ve made a couple of very good points.

On survey mode (the second point): you are correct that the populations that online survey companies sample from are potentially atypical in unobservable ways (i.e. ways that cannot be treated by weighting). That said,

(a) online surveys don’t involve self-selection, but use random sampling from a large ‘population’ of willing participants, which is not nearly as bad as samples constructed simply by self-selection.

(b) of course, the population may still be atypical in some unobserved sense.

(c) but this is only an issue for experiments if the unobservable difference is something that interacts with the treatment effect. (So not just the fact that participants in online surveys are different from Australians in general, but that they’re different in the extent to which they respond to treatment effects.) This is fairly unlikely.

(d) also, the regression analysis we’ve run using online data has produced very similar results to the analysis we’ve run using data from the ANU poll (which is phone based).

(e) there’s a recognised issue with face-to-face surveys (covered by a team of English researchers at the aid conference) insofar as respondents may give answers biased by their desire to seem nice to the person sitting across from them conducting the interview (social desirability bias).

So your concern here is technically correct, and definitely worth considering. But I don’t think it’s too much of an issue for our work at present. That said, we are planning to place a suite of (non-experimental) questions in the AuSSA, which is a gold-standard Australian postal poll. This will give us separate data from a different survey mode, which will be a good check.

On your first point: once again, it is a good point. There are limits to what we can do here because we have finite funding. That said, we are going to try to conduct some experiments where respondents are presented with a richer, less artificial suite of information before answering questions.

Good points, and thanks again for engaging on this.

Terence

Hi Terence

Thanks for this interesting insight. It’s great that you can compare three different methods and see the response to each. Unique opportunity.

I’m curious as to how you picked the 3 different methods to explain why Australia isn’t giving enough aid. It seems to me that they are all numbers-based arguments. They also don’t explain to the public what aid actually is, or how it helps. Even the most successful of the 3 options only had about 23% of people thinking that Australia doesn’t give enough aid. Sure, that’s a big change from the control group, but hardly enough for a campaigner to feel like we’re creating a groundswell of support.

How did you come to the 3 different wordings that you provided?

Thanks heaps.

Thanks,

That’s a great question.

We chose to look at aid volume-related questions because, as mentioned in the post, other research suggested that over-estimation of volumes was associated with lower support for aid. Seeking to know if we could correct this gave us question 1. From there it made sense to follow a theme and add two different frames of reference (the past, and someone else). The UK comparison didn’t give us a groundswell, but it has afforded an interesting insight into how volume-related information works.

I totally agree there are other types of information we should experiment with. We are hoping to do this later this year.

Thanks again for a very good question.

Terence